An ETL job is composed of a transformation script, data sources, and data targets.ĪWS Glue also provides the necessary scheduling, alerting, and triggering features to run your jobs as part of a wider data processing workflow. ETL Job – The business logic that is required to perform data processing.Crawlers help automatically build your Data Catalog and keep it up-to-date as you get new data and as your data evolves. Crawler – Discovers your data and associated metadata from various data sources (source or target) such as S3, Amazon RDS, Amazon Redshift, and so on.They contain metadata they don’t contain data from a data store.

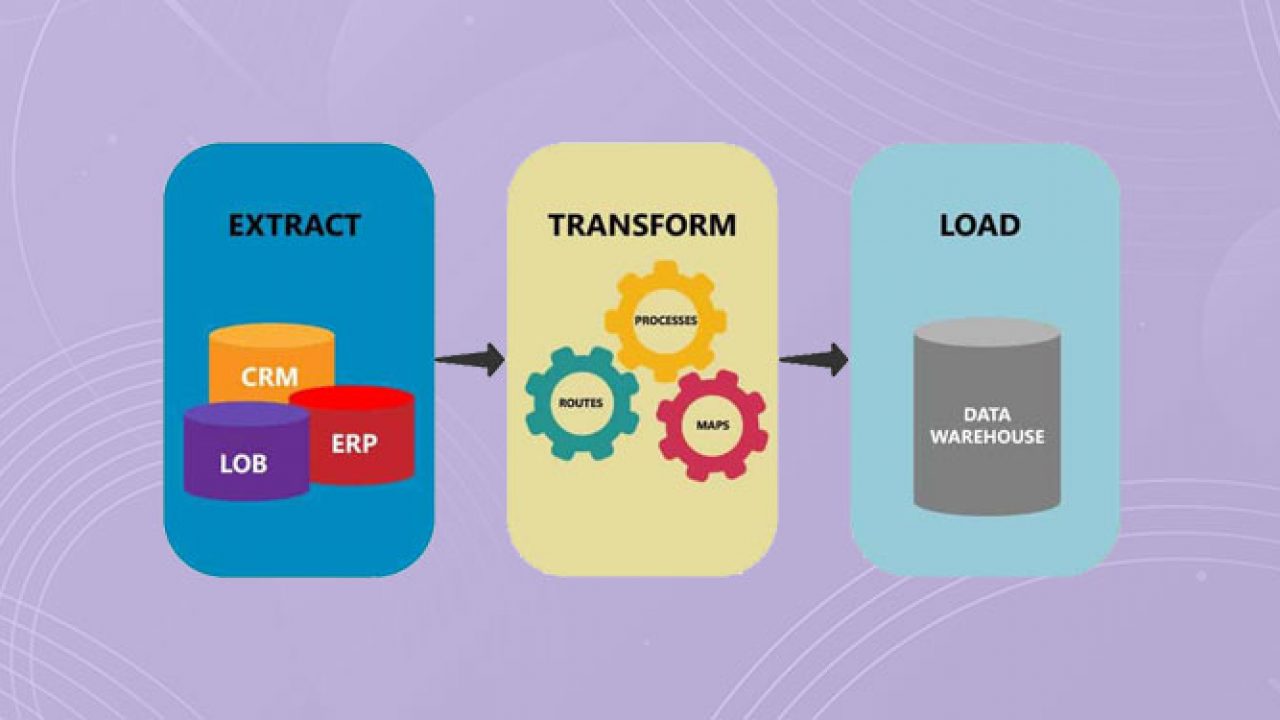

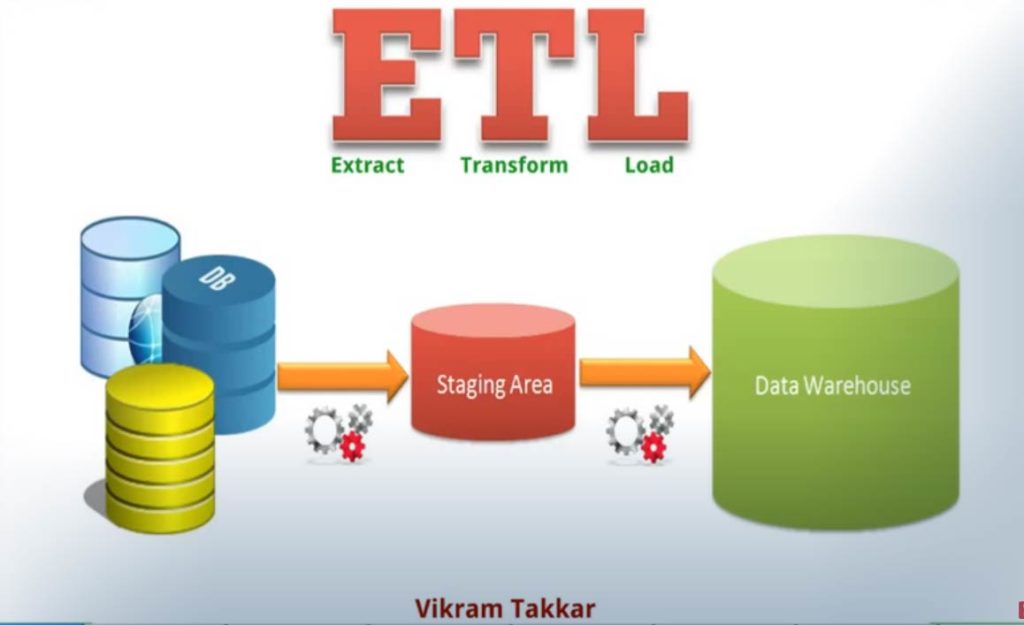

Tables and databases are objects in the AWS Glue Data Catalog. Data Catalog – Serves as the central metadata repository.What we provide you in this post is a framework to get started with AWS Glue and customize as needed. You can also use Amazon Redshift as a data target for building a data warehouse strategy. We also provide a scenario where we show you how to build a centralized data lake in Amazon S3 for easy querying and reporting by using Amazon Athena. We use Amazon Aurora MySQL as the source and Amazon Simple Storage Service (Amazon S3) as the target for AWS Glue. We use this stack to show you how AWS Glue extracts, transforms, and loads data to and from an Amazon Aurora MySQL database. In part 2 of this two-part migration blog series, we build an AWS CloudFormation stack. It’s a serverless, fully managed service built on top of the popular Apache Spark execution framework. This means that you don’t have to spend time hand-coding data flows.ĪWS Glue is designed to simplify the tasks of moving and transforming your datasets for analysis. AWS Glue automatically crawls your data sources, identifies data formats, and then suggests schemas and transformations. After the ETL jobs are built, maintaining them can be painful because data formats and schemas change frequently and new data sources need to be added all the time.ĪWS Glue automates much of the undifferentiated heavy lifting involved with discovering, categorizing, cleaning, enriching, and moving data, so you can spend more time analyzing your data. Traditional ETL tools are complex to use, and can take months to implement, test, and deploy. Without ETL it would be impossible to programmatically analyze heterogeneous data and derive business intelligence from it.One of the biggest challenges enterprises face is setting up and maintaining a reliable extract, transform, and load (ETL) process to extract value and insight from data. ETL takes data that is heterogeneous and makes it homogeneous. 
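To make the idea of turning heterogeneous data into homogeneous data concrete, here is a minimal sketch in plain Python. It is not AWS Glue code. The two sources (a CSV export and a JSON feed), their field names, and the target schema are all hypothetical and chosen only for illustration.

```python
import csv
import io
import json

# Hypothetical source 1: a CSV export with amounts in dollars.
CSV_SOURCE = """customer_id,full_name,amount_usd
1,Ada Lovelace,19.99
2,Alan Turing,5.00
"""

# Hypothetical source 2: JSON records with different field names and amounts in cents.
JSON_SOURCE = '[{"id": "3", "name": "Grace Hopper", "amount_cents": 1250}]'


def extract_csv(text):
    """Extract: read CSV rows as dictionaries keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))


def extract_json(text):
    """Extract: parse a JSON array of records."""
    return json.loads(text)


def transform_csv_row(row):
    """Transform: map the CSV layout onto the shared target schema."""
    return {
        "customer_id": int(row["customer_id"]),
        "name": row["full_name"],
        "amount_cents": round(float(row["amount_usd"]) * 100),
    }


def transform_json_row(row):
    """Transform: map the JSON layout onto the same target schema."""
    return {
        "customer_id": int(row["id"]),
        "name": row["name"],
        "amount_cents": int(row["amount_cents"]),
    }


def run_etl(csv_text, json_text):
    """Run extract and transform for both sources, then 'load' into one list."""
    rows = [transform_csv_row(r) for r in extract_csv(csv_text)]
    rows += [transform_json_row(r) for r in extract_json(json_text)]
    # Load: the target here is simply an in-memory list sorted by key.
    return sorted(rows, key=lambda r: r["customer_id"])


unified = run_etl(CSV_SOURCE, JSON_SOURCE)
for row in unified:
    print(row)
```

After the transform step, every record has the same three fields with the same types, regardless of which source it came from. That shared shape is what makes the combined data queryable as a single table.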
When creating a data warehouse, it is common for data from disparate sources to be brought together in one place so that it can be analyzed for patterns and insights. It would be great if data from all these sources had a compatible schema from the outset, but this is rarely the case.

Once the data is loaded, the ETL process is complete, although in many organizations ETL is performed regularly in order to keep the data warehouse updated with the latest data.
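Running ETL on a regular basis is where the scheduling and triggering features of AWS Glue come in. As a rough sketch that is not taken from the original post, the request for a time-based trigger could be assembled as shown here and passed to the `create_trigger` call of the boto3 Glue client. The trigger name, job name, cron expression, and region are hypothetical placeholders. Actually creating the trigger requires AWS credentials and an existing Glue job, so the sketch only builds and prints the request.

```python
def build_scheduled_trigger_request(trigger_name, job_name, cron_expression):
    """Build the keyword arguments for a time-based AWS Glue trigger."""
    return {
        "Name": trigger_name,
        "Type": "SCHEDULED",
        # Glue schedules use the cron(...) wrapper around a six-field expression.
        "Schedule": f"cron({cron_expression})",
        # The job to start each time the schedule fires.
        "Actions": [{"JobName": job_name}],
        "StartOnCreation": True,
    }


def create_trigger(request, region_name="us-east-1"):
    """Send the request to AWS Glue (needs boto3, credentials, and an existing job)."""
    import boto3  # imported lazily so the rest of the sketch runs without boto3

    glue = boto3.client("glue", region_name=region_name)
    return glue.create_trigger(**request)


# Hypothetical names: a nightly run at 02:00 UTC of a job called "aurora-to-s3-etl".
request = build_scheduled_trigger_request(
    trigger_name="nightly-aurora-to-s3",
    job_name="aurora-to-s3-etl",
    cron_expression="0 2 * * ? *",
)
print(request)
```

Keeping the request construction separate from the AWS call makes the schedule easy to review and test before anything is created in an account.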
